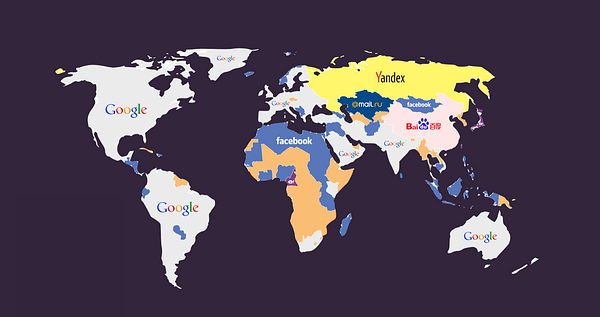

On the risks and opportunities of Mark Zuckerberg’s plan for a global community.

November 12, 2015: Facebook announces a new feature by having Mark Zuckerberg post on his personal feed the first 360 Virtual Reality video on his social network. The feature lets any smartphone owner watch an immersive video and pan the phone around as if actually there. But what was really interesting about the announcement was the content of the video itself: a military parade held in North Korea 🇰🇵, captured right at the moment when crowds cheered as a big poster featuring Kim Jong Un entered the scene.

Deploying unknown technology just a few feet away from authority to capture images under the most secrecy-jealous government in the world (one that doesn’t even allow tourists to take pictures without authorization) certainly feels like flipping the bird to an autocratic regime. How would the Great Leader react to such a provocation?

January 6, 2016 (8 weeks later): North Korea conducted its first nuclear test in three years, claiming it had begun testing a thermonuclear weapon considered among the most dangerous known to mankind: the H-Bomb. Specialists from China and the US argued that the seismic event measured in North Korean territory during the test had a magnitude of 5.1, insufficient for an H-Bomb but nonetheless compatible with a 9 kiloton atomic bomb.

Kim Jong Un and Mark Zuckerberg have more in common than the fact that they wear the same outfit to work every day: they’re both millennials amassing unprecedented power in a perfect juxtaposition of styles. While one aims to split the atom in order to instill fear, the other multiplies the bits to anticipate behavior. It is the dialectical relation between force and intelligence. Their respective empires are the perfect example of the clash between matter and information: the land and the cloud.

It’s been years since most of us last thought of Facebook as a disruptive startup. Mark Zuckerberg, once an unapologetic, brilliant hacker, now tours the United States meeting with political and industry leaders to rethink the role of Facebook in the formation of what he calls a global community, all while running a corporation that has become much more than another site on the web.

Recently, Zuckerberg outlined his vision for the company in a long, quite controversial manifesto. The text emerges in a special context: Facebook is accused of hosting an epidemic of fake news, enabled by blind algorithms that were gamed through a smart use of data to target every demographic in America with the perfect fake article on either Clinton or Trump, benefitting the latter. It was a shadow strategy that certainly helped elect as president a man who seems determined to take measures to make the world less “open and connected”.

The whole 6,000-word manifesto is pretty much summarized here:

“History is the story of how we’ve learned to come together in ever greater numbers — from tribes to cities to nations. At each step, we built social infrastructure like communities, media and governments to empower us to achieve things we couldn’t on our own. Today we are close to taking our next step.”

That next step is obviously Facebook, where 1.8 billion people gather every day, forming the largest group under a centralized administration in history.

Mark Zuckerberg insists on talking about his company as “social infrastructure” for the global community, meaning he intends to replace many existing institutional roles (like preventing crime and suicide or finding terrorists) with a single platform. Beyond Zuckerberg’s curious and ambiguous use of the trendy term “infrastructure”, we want to highlight the important dimensions in which these instruments of community-shaping could endanger our freedom and personal sovereignty.

Facebook as government means Facebook as the police.

We must take into consideration that Facebook is building a global community run by a platform that, among other features, has by design:

- Facial recognition.

- Access to your private chats.

- Knowledge of who you privately stalk while browsing their site.

- Records of who went to which event (and with whom).

- Ability to censor and curate information (even without users realizing it: shadow banned content can’t be detected).

- Proliferation of fake news & filter bubbles.

- A record of experimenting with users’ emotions, and more means of surveillance than we can know of.

Many human rights defenders are becoming aware of this, and they already have a hard time fighting Facebook’s current law enforcement rules. The ACLU and other organizations recently sent the company a letter stating:

We are deeply concerned with the recent cases of Facebook censoring human rights documentation, particularly content that depicts police violence.

And Facebook has been quick to react:

Profiling is an ability Facebook has thanks to the tons of data it captures from its users while building a company that draws 97% of its revenue from advertising. By tracking behavior and operating as a digital passport on the web, Facebook optimizes the messaging of those organizations that invest their money in shaping desire and opinion. And although engineers on Facebook’s big data team are forbidden from querying the internal database in ways that connect more than two data points (according to their contract, they will be fired immediately), the possibility of generating racial, religious and ideological registries remains a byproduct of Facebook’s attention farm, even without any will to pursue such a goal.

To understand that code is law is also to understand the need to prevent code from becoming the law, because a program can’t be bargained with. It can’t be reasoned with. It doesn’t feel pity, or remorse, or fear. An automated system might seem like a good substitute for corruptible, fallible human beings, but, as the story of IBM helping Nazi Germany with the registry of Jews during the Holocaust shows, it has also proven to be the most effective way to bring about mass torture and murder. In simpler words: leaving security and law enforcement duties to Facebook’s algorithms can be dangerous.

Achieving Personal Sovereignty.

Sir Tim Berners-Lee, known for having invented the World Wide Web, recently named the three most pressing issues that he believes need to be addressed in order to keep the internet open and free:

- “We’ve lost control of our personal data”

- “It’s too easy for misinformation to spread on the web”

- “Political advertising online needs transparency and understanding”

None of these issues can be fixed through the means of traditional software development. Nothing in Facebook’s development stack can guarantee a solution, because at its core the problem can be described with a single concept: data centralization, the very same strategy used by autocratic regimes. Hence the rise of new protocols of trust like blockchains, which operate over decentralized information networks and can guarantee users that no single corporation or entity is acting as Big Brother.

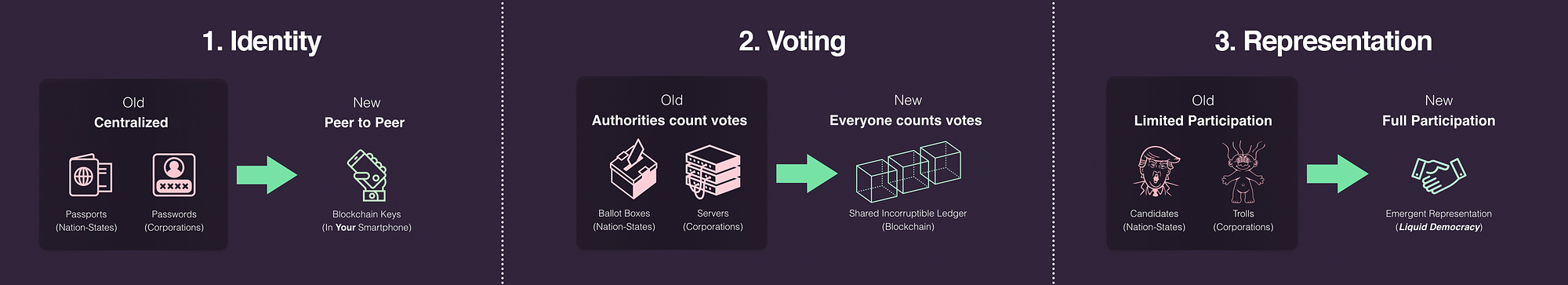

When abstracted, the main interactions we have with Facebook on a daily basis are exactly the same ones we have with our government: identity, voting (liking) and representation. What happens if we settle these interactions on a public decentralized information architecture such as a blockchain?
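To make the question a little more concrete, here is a minimal sketch of the one property that such an architecture borrows from blockchains: a record of votes that anyone can check for tampering. It is only an illustration under loud assumptions. The names `append_entry`, `verify_ledger` and the vote fields are hypothetical, and the sketch leaves out everything that makes a real blockchain decentralized (consensus, digital signatures, replication across many nodes).

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry (an assumption of this sketch).
GENESIS_HASH = "0" * 64


def entry_hash(prev_hash, payload):
    """Hash an entry together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(ledger, payload):
    """Append a payload (e.g. a vote) to the ledger, chaining it to the last entry."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    entry = {
        "prev": prev_hash,
        "payload": payload,
        "hash": entry_hash(prev_hash, payload),
    }
    ledger.append(entry)
    return entry


def verify_ledger(ledger):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each entry commits to the hash of the one before it, whoever holds a copy of the ledger can detect a silently edited vote by calling `verify_ledger`, without having to trust the party that stores the data.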

At Democracy Earth Foundation we believe that what open source did for software, blockchains will do for institutions. The interactions that are now held inside the walled (feudal?) garden of Facebook, as they become a more relevant geopolitical factor driving modern society, need to migrate towards a public commons that aims to include every voice on the planet while guaranteeing algorithmic accountability. When software becomes decentralized, sovereignty is what gets transferred to the users. And sovereign software needs to be built in the open, in direct collaboration with the pioneering technologies that hold the promise of making any wall obsolete.

Join us. (Democracy.Earth)